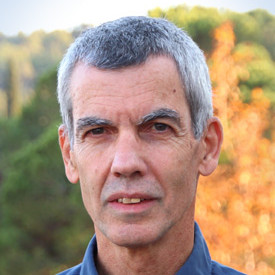

Hello

I'm Dmitry Kuznichov

ML Applied Researcher

Highly educated and experienced machine learning and computer vision expert with a passion for problem-solving. I received my M.Sc. in Computer Science from the Technion, where my research focused on improving the convergence rate of multigrid algorithms using neural networks. My thesis received a grade of 93, and my overall GPA was 91.9. Before that, I completed two B.Sc. degrees, in Applied Mathematics and in Electrical and Electronic Engineering, both with high honors and a GPA above 94 in each.

Throughout my career, I have worked on a variety of projects, including face recognition misidentification analysis, creation of a synthetic depth-RGB stereo image dataset, and data augmentation for leaf segmentation and counting tasks in rosette plants. I have also served as a teaching assistant for Infinitesimal Calculus and as a machine learning researcher for the Phenomics Consortium at the Technion. I have a strong programming background in Python, MATLAB, and C/C++, and I am proficient with tools such as TensorFlow, PyTorch, and OpenCV.

Outside of work, I enjoy orienteering, hiking, sailing, and diving. I am also a veteran of the Israel Defense Forces, where I served as a combat medic and squad sergeant in the Special Forces Battalion. I am now seeking the next challenge in my career.

Papers

Deep learning techniques for image processing and data analysis are constantly evolving. Many domains adapt these techniques for object segmentation, instantiation, and classification. Recently, agricultural industries have adopted these techniques to bring automation to farmers around the globe. One analysis procedure required for automatic visual inspection in this domain is leaf counting and segmentation. Collecting labeled data from field crops and greenhouses is complicated by the large variety of crops, growth seasons, climate changes, phenotype diversity, and more, especially when specific learning tasks require a large amount of labeled data for training. Data augmentation for training deep neural networks is well established; examples include data synthesis, generative semi-synthetic models, and various kinds of transformations. In this paper, we propose a data augmentation method that preserves the geometric structure of the data objects, thus keeping the physical appearance of the dataset as close as possible to that of plants imaged in real agricultural scenes. The proposed method achieves state-of-the-art results on the standard benchmark in the field, namely the ongoing Leaf Segmentation Challenge hosted by Computer Vision Problems in Plant Phenotyping (CVPPP).
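The abstract does not spell out the augmentation procedure, so the snippet below is only a generic illustration of what "preserving geometric structure" can mean for segmentation and counting labels, not the method proposed in the paper. It assumes an RGB image stored as an `(H, W, 3)` NumPy array and an instance mask where 0 is background and each leaf has its own positive integer label; the helper names `augment_pair` and `leaf_count` are hypothetical. A rigid transform (a multiple of 90° rotation plus an optional flip) is applied identically to the image and the mask, so every leaf keeps its shape and area and the leaf count stays valid ground truth.

```python
import numpy as np


def augment_pair(image, mask, rng):
    """Apply one random rigid transform (k * 90 degree rotation plus an
    optional horizontal flip) identically to an image and its instance mask.

    Rigid transforms keep every leaf's shape and area intact, so the
    per-instance labels and the leaf count remain valid ground truth.
    """
    k = int(rng.integers(4))  # number of quarter turns: 0, 1, 2 or 3
    image = np.rot90(image, k, axes=(0, 1))
    mask = np.rot90(mask, k, axes=(0, 1))
    if rng.random() < 0.5:
        # Flip the width axis of both arrays in the same way.
        image, mask = image[:, ::-1], mask[:, ::-1]
    return image.copy(), mask.copy()


def leaf_count(mask):
    """Number of leaf instances: distinct non-zero labels (0 = background)."""
    return int(np.count_nonzero(np.unique(mask)))


# Toy example: a random "image" with three rectangular "leaves".
rng = np.random.default_rng(0)
image = rng.random((64, 64, 3))
mask = np.zeros((64, 64), dtype=np.int32)
mask[5:20, 5:15] = 1
mask[30:40, 10:50] = 2
mask[45:60, 40:60] = 3

aug_image, aug_mask = augment_pair(image, mask, rng)
print(leaf_count(mask), leaf_count(aug_mask))  # → 3 3
```

Because the image and the mask go through exactly the same transform, pixel-to-label correspondence is kept, which is what a segmentation loss needs; photometric or elastic augmentations would need extra care to keep that guarantee.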


During the last decade, Neural Networks (NNs) have proved to be extremely effective tools in many fields of engineering, including autonomous vehicles, medical diagnosis, and search engines, and even in art creation. Indeed, NNs often decisively outperform traditional algorithms. One area that has only recently attracted significant interest is the use of NNs to design numerical solvers, particularly for discretized partial differential equations. Several recent papers have considered employing NNs to develop multigrid methods, which are a leading computational tool for solving discretized partial differential equations and other sparse-matrix problems. We extend these ideas, focusing on relaxation operators (also called smoothers), an important component of the multigrid algorithm that has not yet received much attention in this context. We explore an approach that uses NNs to learn relaxation parameters for an ensemble of diffusion operators with random coefficients, both for Jacobi-type smoothers and for 4-Color Gauss-Seidel smoothers. The latter yield exceptionally efficient and easy-to-parallelize Successive Over-Relaxation (SOR) smoothers. Moreover, this work demonstrates that learning relaxation parameters on relatively small grids, using a two-grid method and Gelfand's formula as a loss function, can be implemented easily. These methods efficiently produce nearly optimal parameters, thereby significantly improving the convergence rate of multigrid algorithms on large grids.
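Gelfand's formula states that the spectral radius of the two-grid error-propagation matrix $M$ satisfies $\rho(M) = \lim_{k\to\infty} \|M^k\|^{1/k}$, so a finite-$k$ value of $\|M^k\|^{1/k}$ can act as a loss that measures the asymptotic convergence factor. The sketch below illustrates this under strong simplifying assumptions and is not the paper's method: it uses a 1D Poisson matrix instead of the random-coefficient diffusion ensemble, a single weighted-Jacobi parameter ω instead of NN-predicted parameters, linear interpolation with full-weighting restriction and a Galerkin coarse operator, and a plain grid search over ω in place of training. All function names (`poisson_1d`, `two_grid_operator`, `gelfand_estimate`, and so on) are hypothetical.

```python
import numpy as np


def poisson_1d(n):
    """Standard 3-point Laplacian tridiag(-1, 2, -1) on n interior points."""
    return 2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)


def interpolation(n_coarse):
    """Linear interpolation from n_coarse to 2 * n_coarse + 1 fine points."""
    P = np.zeros((2 * n_coarse + 1, n_coarse))
    for j in range(n_coarse):
        i = 2 * j + 1  # fine index that coincides with coarse point j
        P[i - 1, j] = 0.5
        P[i, j] = 1.0
        P[i + 1, j] = 0.5
    return P


def two_grid_operator(A, omega):
    """Error propagation M = S K S: one pre- and one post-smoothing step of
    weighted Jacobi around an exact Galerkin coarse-grid correction K."""
    n = A.shape[0]
    P = interpolation((n - 1) // 2)
    R = 0.5 * P.T                     # full-weighting restriction
    A_c = R @ A @ P                   # Galerkin coarse-grid operator
    K = np.eye(n) - P @ np.linalg.solve(A_c, R @ A)
    S = np.eye(n) - omega * A / np.diag(A)[:, None]  # I - omega * D^{-1} A
    return S @ K @ S


def gelfand_estimate(M, k=64):
    """Finite-k Gelfand estimate ||M^k||_2^(1/k), an upper bound on rho(M)."""
    return np.linalg.norm(np.linalg.matrix_power(M, k), 2) ** (1.0 / k)


def spectral_radius(M):
    """Exact spectral radius, for comparison with the Gelfand estimate."""
    return np.max(np.abs(np.linalg.eigvals(M)))


A = poisson_1d(31)
for omega in (0.5, 2.0 / 3.0, 0.9):
    M = two_grid_operator(A, omega)
    print(f"omega={omega:.3f}  rho={spectral_radius(M):.4f}  "
          f"gelfand(k=64)={gelfand_estimate(M):.4f}")

# Stand-in for "learning" the parameter: minimize the Gelfand loss over a grid.
omegas = np.linspace(0.5, 1.0, 51)
losses = [gelfand_estimate(two_grid_operator(A, w)) for w in omegas]
best_omega = omegas[int(np.argmin(losses))]
print(f"best omega on this grid: {best_omega:.2f}")
```

For this model problem the search should land close to the classical weighted-Jacobi value ω = 2/3. The estimate needs only matrix products and a norm rather than an eigenvalue solve, which is one plausible reason such a quantity is convenient as a loss in a training loop; the paper's actual loss and training setup may differ.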